Projects

AI agent platforms, developer tools, foundation model research, and ML pipelines.

AI agent platform with four activation paths (LLM config, Python class, graph pipeline, A2A delegation), isolated service contexts per activation, real-time WebSocket streaming, auto-generated data models and CRUD APIs, and pluggable worker backends (Local, Celery, RabbitMQ, SQS, Redis, External).

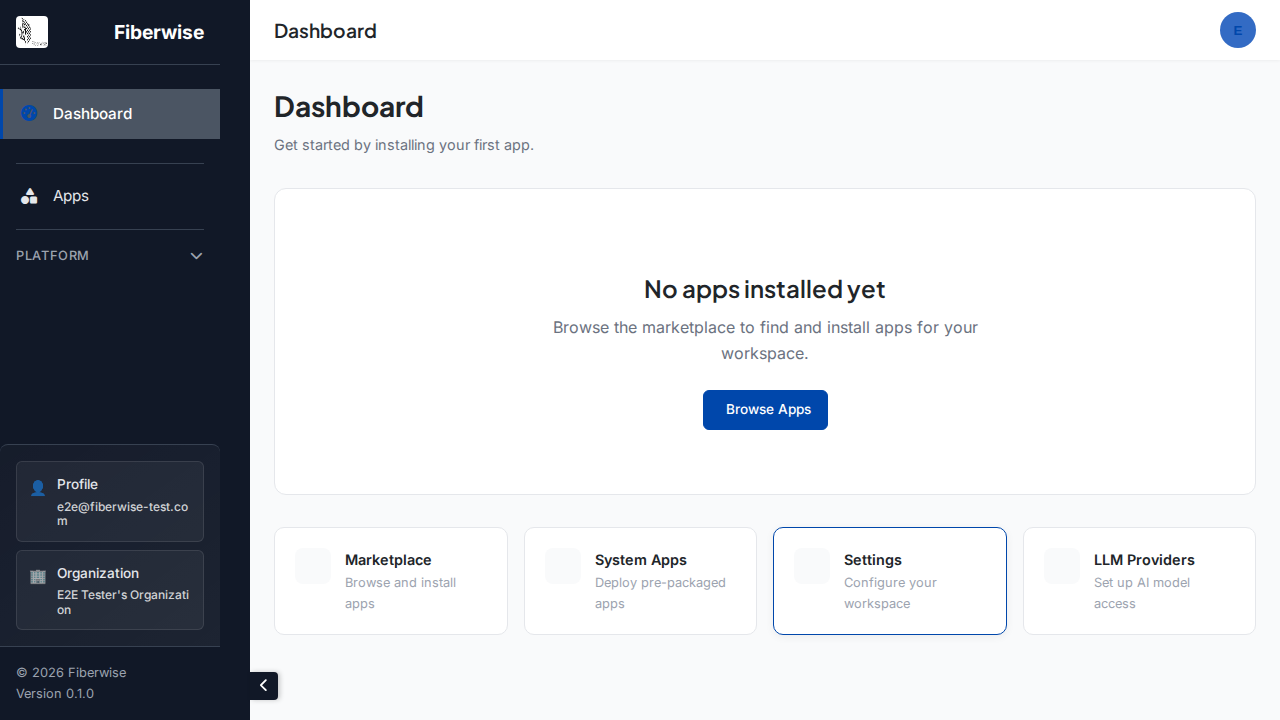

Dashboard

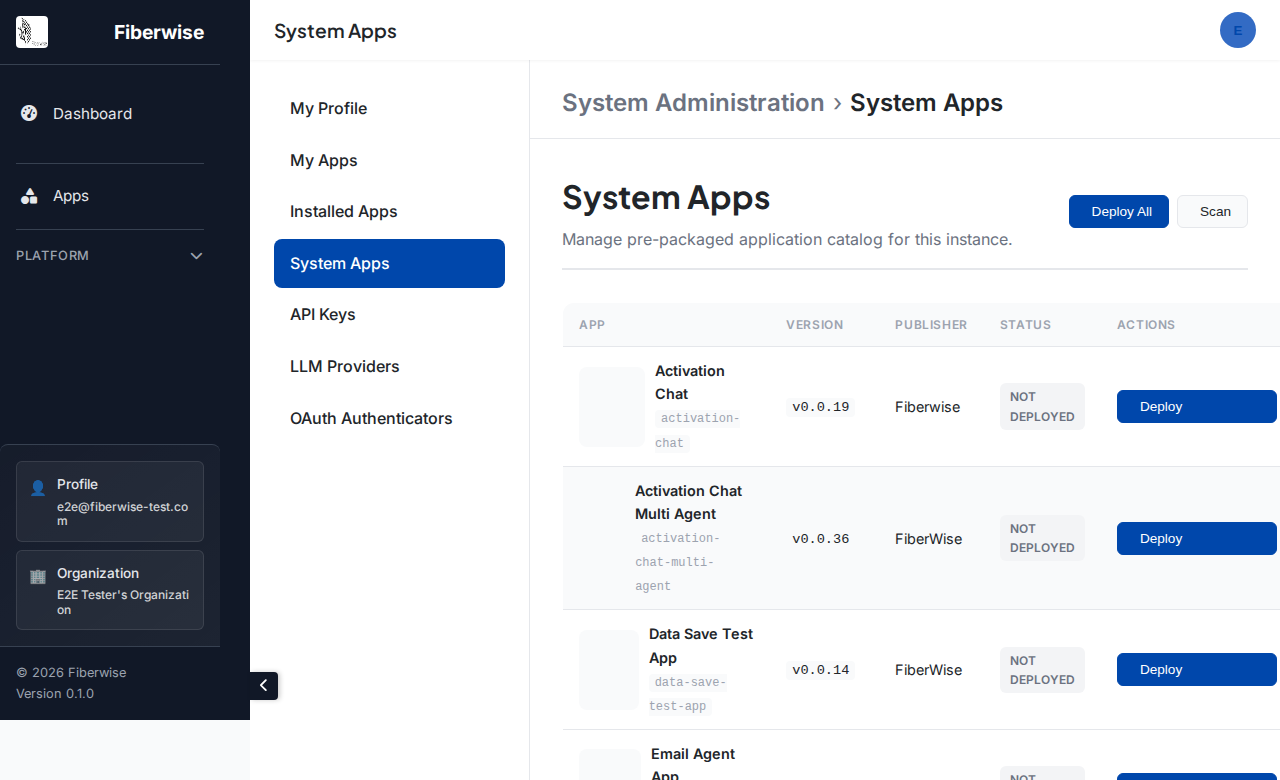

System Apps

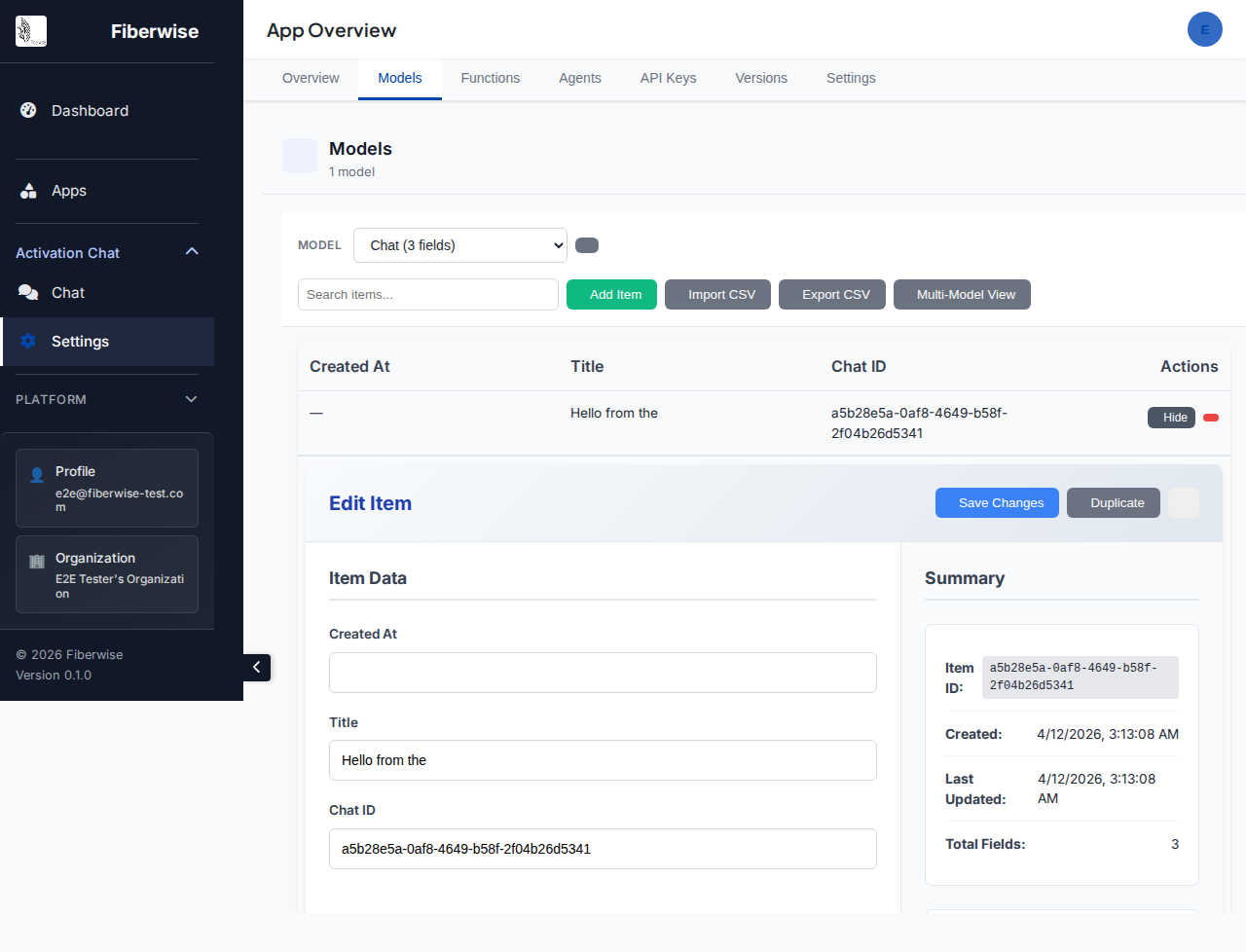

App Models

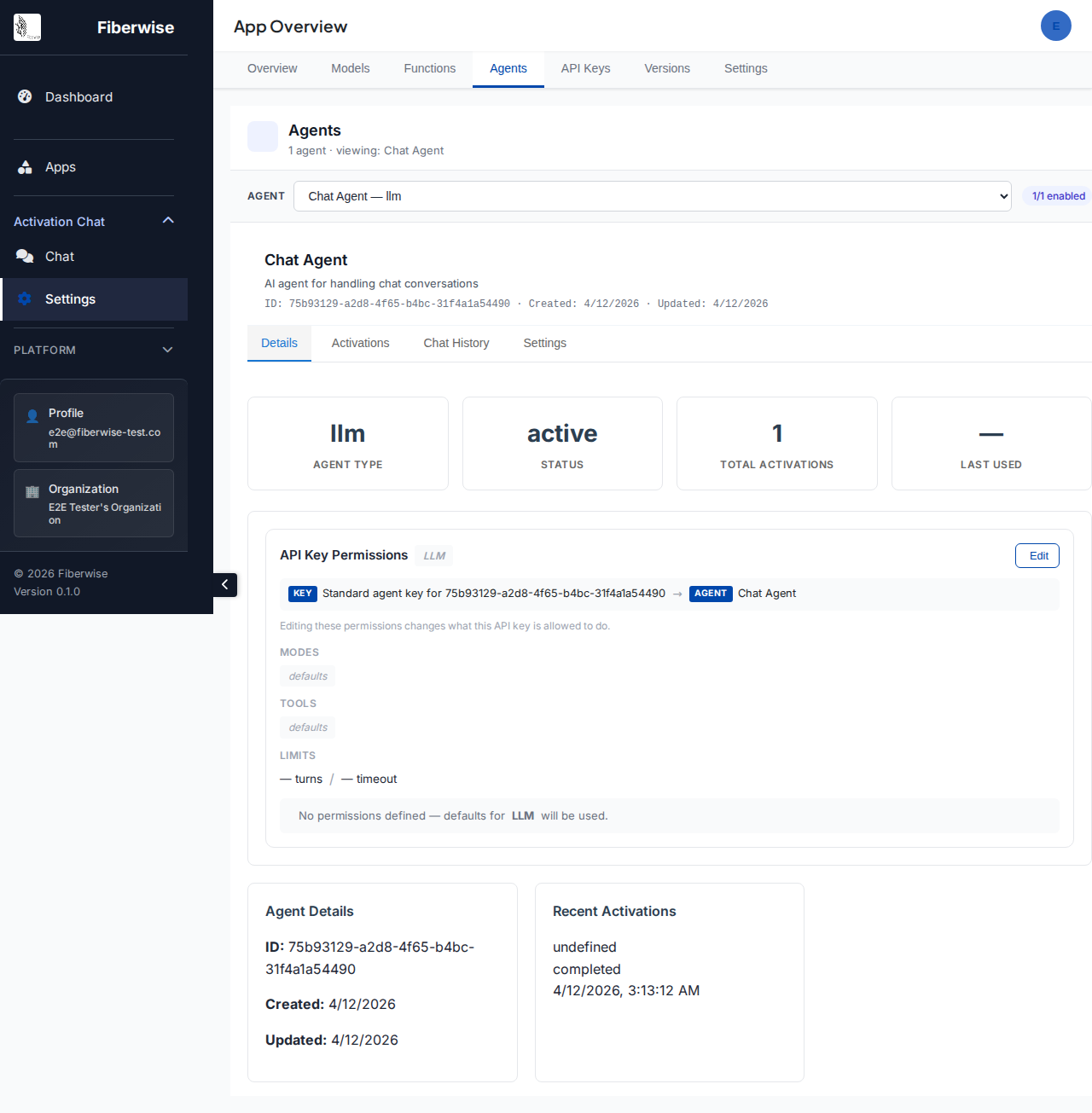

Agent Permissions

Shared library every Fiberwise component depends on. Agent base class supporting Python class, plain function, or config-only LLM agents. JSON schema validation for inputs/outputs. Full service layer: user management, API keys, OAuth, LLM providers (OpenAI, Anthropic, Google AI, Ollama), telemetry, email, scheduling, circuit breakers, and file storage. Database abstraction via NexusQL.

Command-line tool for building, deploying, and running Fiberwise apps. Auto-detects agents and pipelines from Python files. Four execution modes: sequential, parallel, piped chain, or multi-turn conversation. One command starts the full local dev stack. Deploys with automatic version bumping and manages apps across multiple instances.

Client library for browser and server-side apps on the Fiberwise platform. Real-time SSE streaming with partial results. Auto-detects browser vs Node.js. Built-in app router, WebSocket with auto-reconnection and message queueing, and activation history queries with filters and pagination.

Client library for Python apps and scripts. Async iterator streaming with message buffering. Works in async and sync Python. Same SDK calls for local database or remote API. Built-in email and file storage abstractions. Runtime LLM provider configuration. Package and deploy AI models as versioned Fiberwise apps.
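A minimal sketch of async iterator streaming with message buffering, using only the standard library. `BufferedStream`, `publish`, and `close` are hypothetical names chosen for illustration and are not the SDK's actual API; the sketch only shows the general pattern of a producer filling a buffer while a consumer iterates with `async for`.

```python
import asyncio


class BufferedStream:
    """Async iterator that buffers messages until a consumer reads them.

    Hypothetical illustration; not the Fiberwise SDK interface.
    """

    _DONE = object()  # sentinel marking the end of the stream

    def __init__(self, maxsize=100):
        # A bounded queue applies backpressure when the consumer falls behind.
        self._queue = asyncio.Queue(maxsize=maxsize)

    async def publish(self, message):
        await self._queue.put(message)

    async def close(self):
        await self._queue.put(self._DONE)

    def __aiter__(self):
        return self

    async def __anext__(self):
        item = await self._queue.get()
        if item is self._DONE:
            raise StopAsyncIteration
        return item


async def demo():
    stream = BufferedStream()

    async def producer():
        # Stand-in for partial results arriving from an agent activation.
        for chunk in ["Hel", "lo", "!"]:
            await stream.publish(chunk)
        await stream.close()

    task = asyncio.create_task(producer())
    received = [chunk async for chunk in stream]
    await task
    return "".join(received)
```

Synchronous callers could wrap the same coroutine with `asyncio.run(demo())`, which is one common way a library can serve both async and sync code.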

Reference apps that ship with the platform. Chat apps with full activation-based message history. Multi-agent conversations with message editing and branching. Email agent with OAuth (Gmail, Outlook, Yahoo) and AI analysis. Event-driven pipelines with retry, timeout, and role-based manual triggers. Each app fully declared in one YAML manifest.

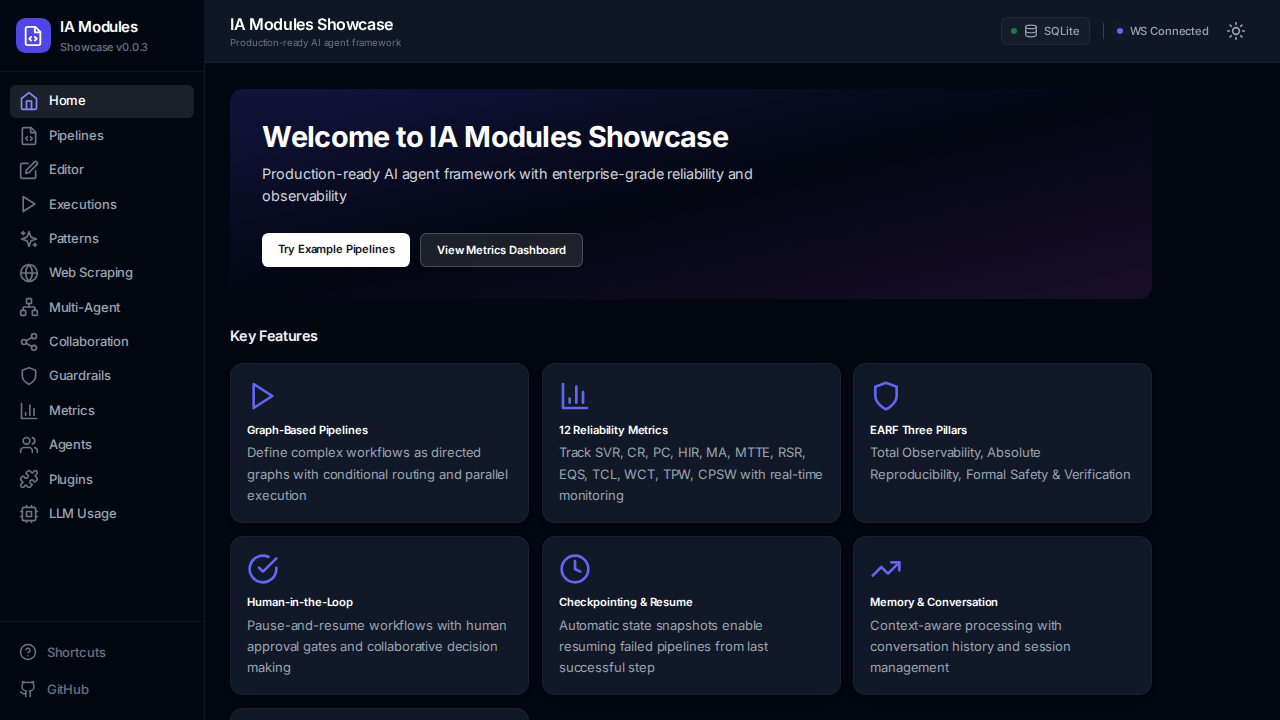

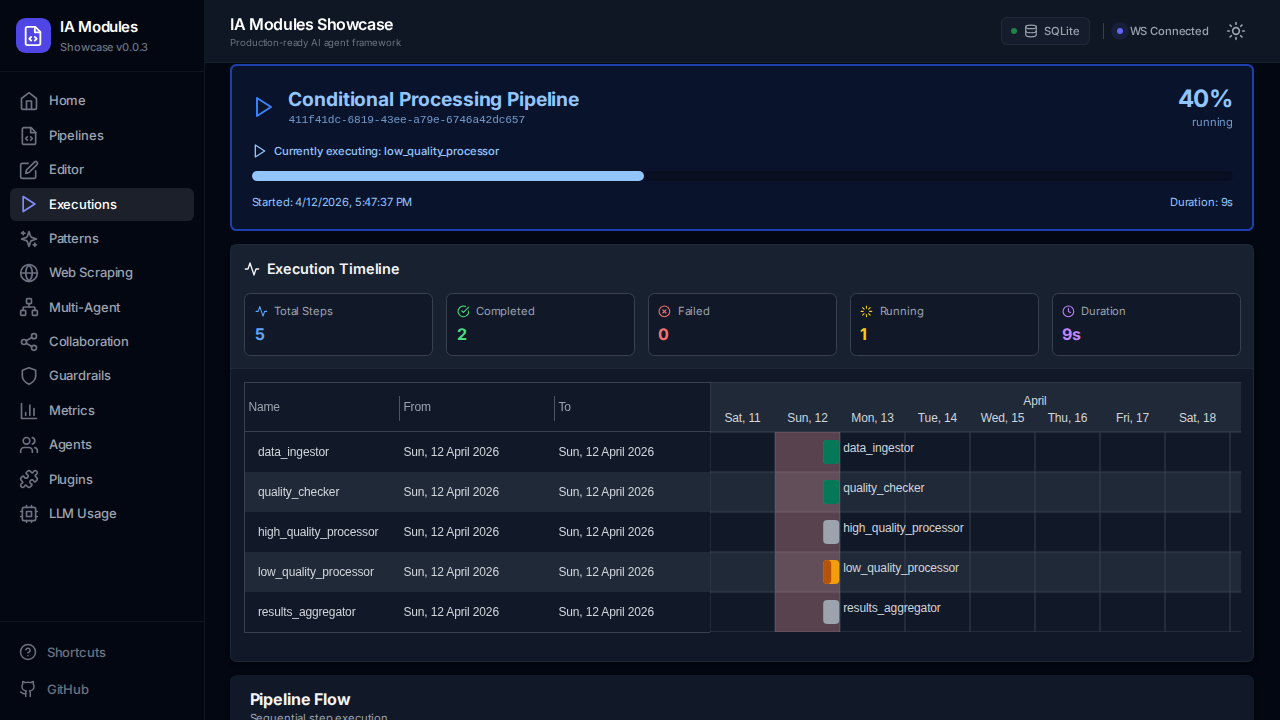

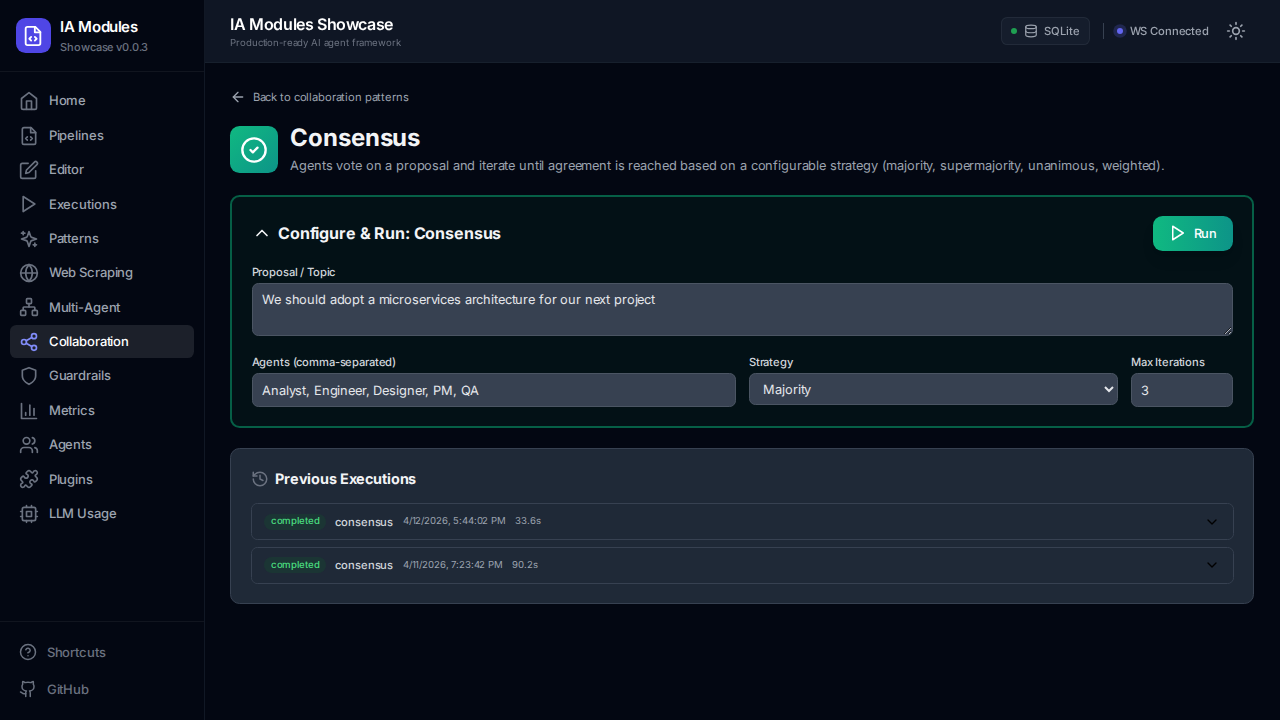

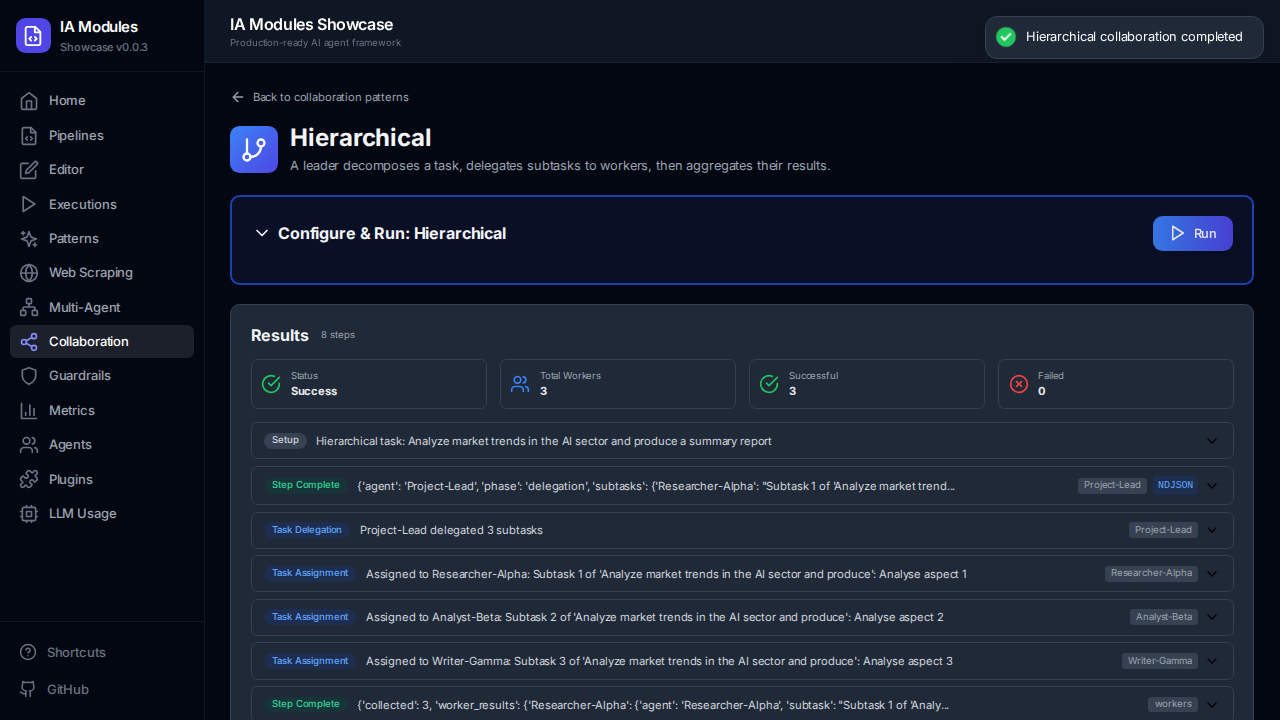

Python framework for building AI agent pipelines with graph-based execution and multi-agent coordination. Six step types (LLM, Function, Agent, A2A, Parallel, Orchestrator) with topological resolution and auto-parallelization. Zero-trust JWT permissions, orchestrator patterns (consensus, debate, hierarchical delegation), conditional routing, checkpointing, and pluggable identity providers.
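Topological resolution with auto-parallelization can be sketched with the standard library's `graphlib`. This is an illustration of the general technique under stated assumptions, not the framework's implementation: the `parallel_layers` helper and the step names in `pipeline` are hypothetical.

```python
from graphlib import TopologicalSorter


def parallel_layers(dependencies):
    """Group steps into layers.

    Steps in the same layer have all their dependencies satisfied by
    earlier layers, so they could be dispatched concurrently.
    """
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    layers = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted only for stable output
        layers.append(ready)
        sorter.done(*ready)
    return layers


# Hypothetical pipeline: each step maps to the set of steps it depends on.
pipeline = {
    "fetch": set(),
    "summarize": {"fetch"},
    "classify": {"fetch"},
    "report": {"summarize", "classify"},
}

layers = parallel_layers(pipeline)
# layers == [["fetch"], ["classify", "summarize"], ["report"]]
```

Here `summarize` and `classify` land in the same layer because both depend only on `fetch`, which is the property a scheduler would use to run them in parallel.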

Showcase Home

Execution Timeline

Consensus

Hierarchical

Universal data access layer: write SQL once and run it on SQLite, PostgreSQL, MySQL, or MSSQL. Auto-translates PostgreSQL DDL to other dialects and rewrites parameter syntax per dialect. Migration runner that tracks schema versions across all supported databases.
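Per-dialect parameter translation can be sketched as follows. The sketch assumes queries are written with PostgreSQL-style numbered placeholders (`$1`, `$2`) and that the target drivers expect `?` or `%s`. Both are assumptions for illustration, as are the `translate_params` function and the `PLACEHOLDERS` table, which are not NexusQL's API.

```python
import re

# Assumed positional marker per dialect; real drivers may differ.
PLACEHOLDERS = {"sqlite": "?", "mysql": "%s", "mssql": "?"}

_PARAM = re.compile(r"\$(\d+)")


def translate_params(sql, params, dialect):
    """Rewrite $1, $2, ... placeholders for the target dialect.

    Returns the rewritten SQL plus the parameters reordered to match the
    positional markers. Placeholders inside string literals are not
    handled in this sketch.
    """
    if dialect == "postgresql":
        return sql, list(params)
    marker = PLACEHOLDERS[dialect]
    ordered = []

    def replace(match):
        index = int(match.group(1)) - 1  # $n is 1-based
        ordered.append(params[index])
        return marker

    return _PARAM.sub(replace, sql), ordered


sql, args = translate_params(
    "SELECT * FROM users WHERE org = $2 AND id = $1", [7, "acme"], "sqlite"
)
# sql  == "SELECT * FROM users WHERE org = ? AND id = ?"
# args == ["acme", 7]
```

Reordering the arguments matters because numbered placeholders can appear in any order, while `?` and `%s` markers are bound strictly by position.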

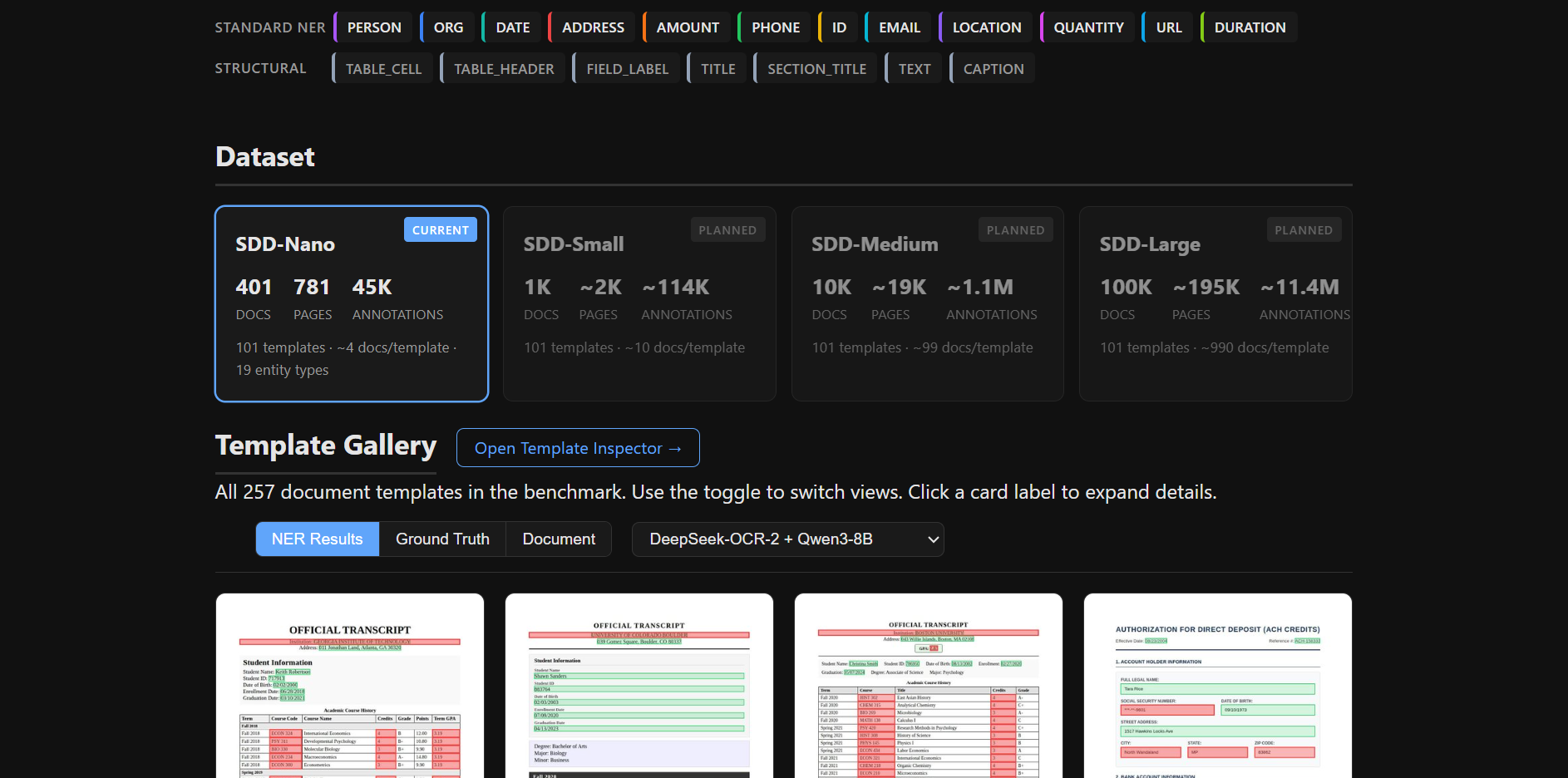

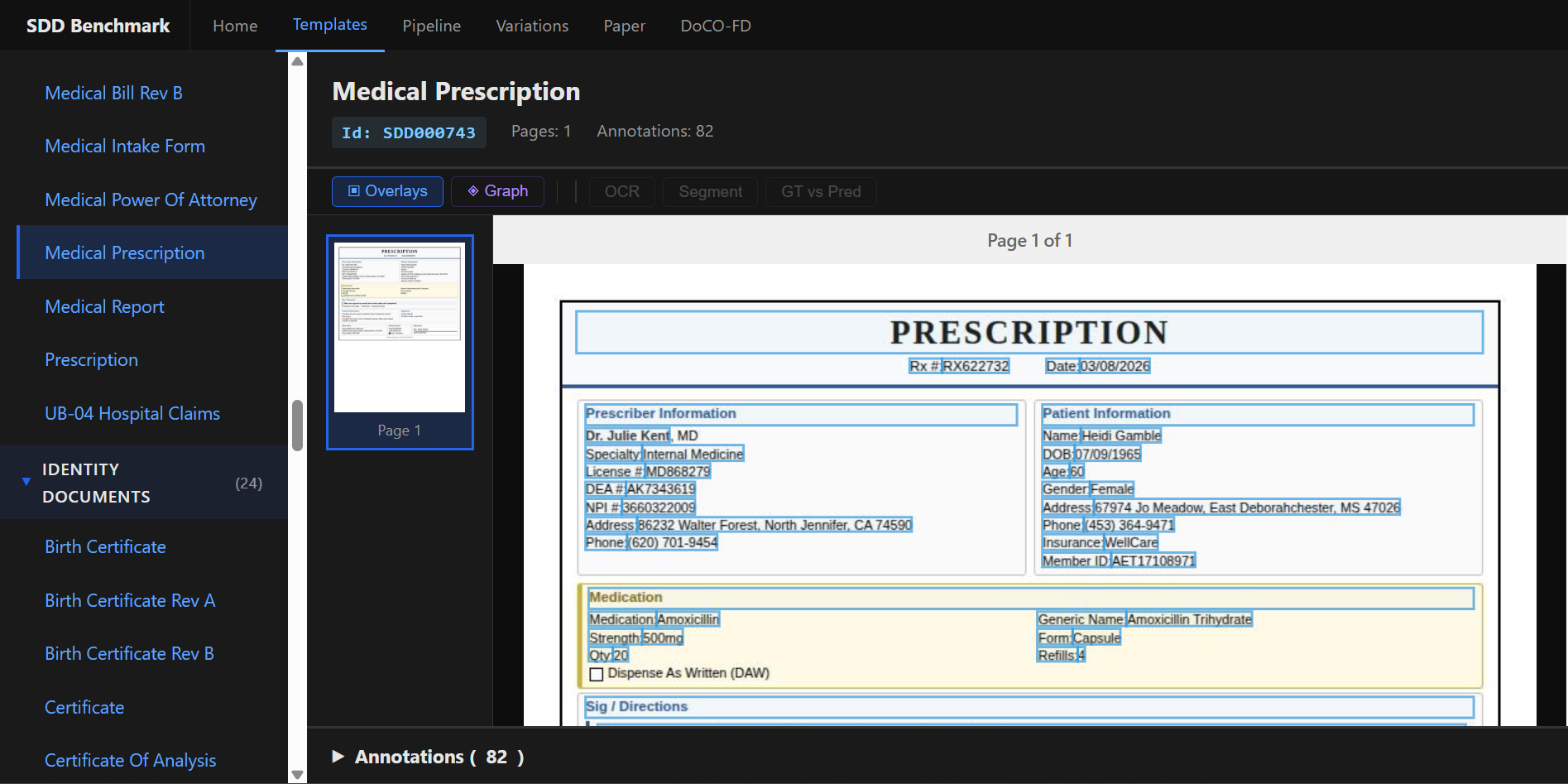

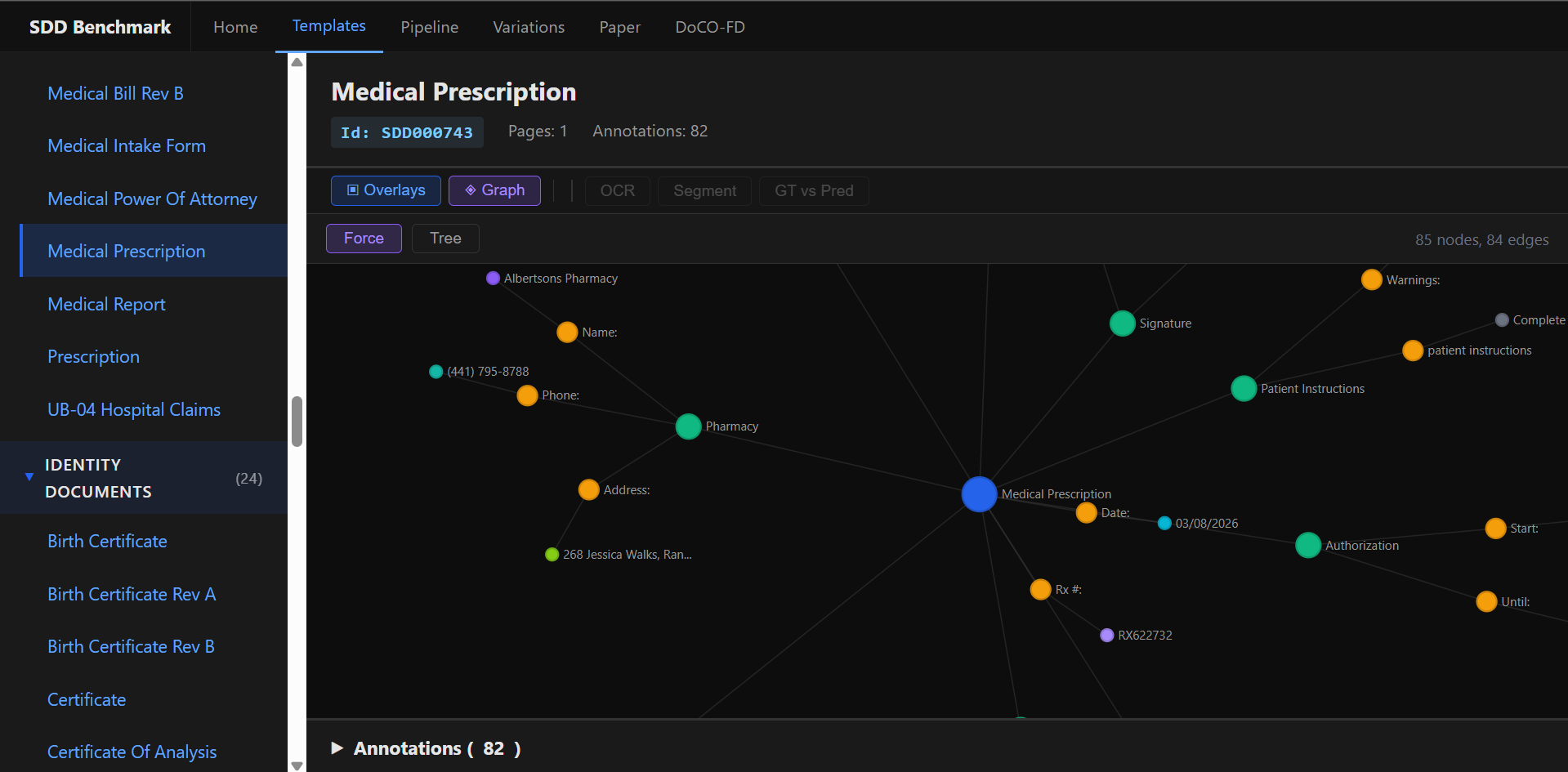

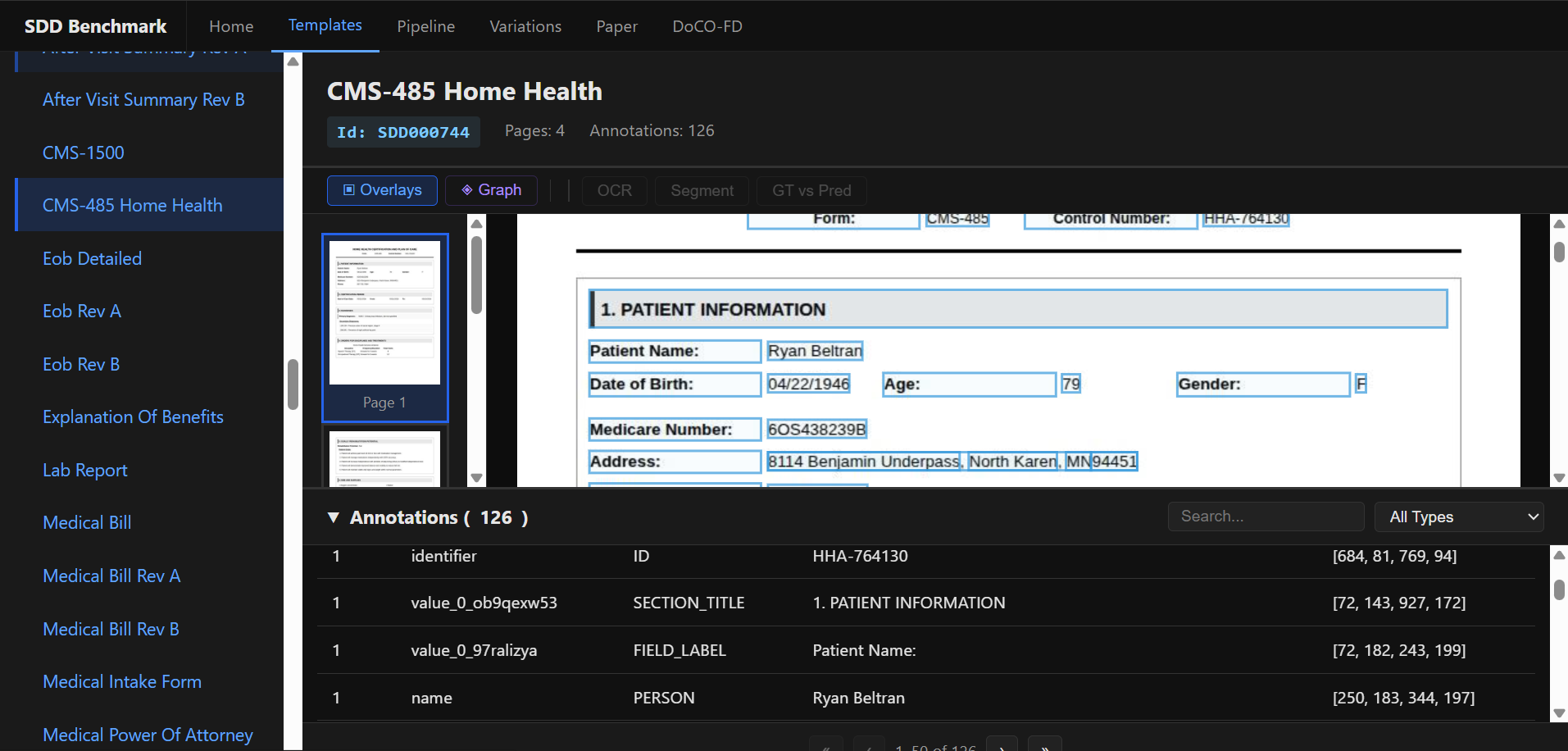

SDD Benchmark Site

Synthetic Document Dataset — end-to-end pipeline from template-based document generation through fine-tuning to public benchmark evaluation. 14 entity types across 275+ templates with annotations in JSON, JSON-LD, and Turtle (RDF). Fine-tuning pipeline supporting 8 VLM configs with SFT and GRPO reinforcement learning. Four benchmark tracks with bootstrap significance tests.
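A bootstrap significance test between two systems might look like the sketch below. The source does not state which bootstrap variant the benchmark uses, so this assumes a paired bootstrap over per-document scores; `paired_bootstrap` and its parameters are hypothetical names.

```python
import random


def paired_bootstrap(scores_a, scores_b, n_resamples=2000, seed=0):
    """Estimate how often system A fails to beat system B under resampling.

    Returns (observed mean difference, fraction of resamples in which the
    mean difference is <= 0). A small fraction suggests A's advantage is
    unlikely to be an artifact of the particular test documents.
    """
    if len(scores_a) != len(scores_b):
        raise ValueError("scores must be paired per document")
    rng = random.Random(seed)  # seeded for reproducibility
    n = len(scores_a)
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    observed = sum(diffs) / n
    not_better = 0
    for _ in range(n_resamples):
        # Resample documents with replacement, keeping A/B scores paired.
        sample = [diffs[rng.randrange(n)] for _ in range(n)]
        if sum(sample) / n <= 0:
            not_better += 1
    return observed, not_better / n_resamples
```

Pairing the scores per document removes variance caused by some documents simply being harder than others, which is why this variant is common for benchmark comparisons.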

Dataset Explorer

Template Inspector

Knowledge Graph

Annotations

Geometric Rotation Transformer — a novel transformer architecture where all weights are learned Givens rotations on SO(n), replacing standard linear layers entirely. Butterfly-shuffled rotation stages with content-dependent angle modulation via Clifford/Stokes coupling. Mixture of Rotations FFN replaces the standard MLP feedforward. Custom CUDA kernels.
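The basic building block, a Givens rotation, can be sketched in plain Python. This shows only the primitive and a single stage of rotations on disjoint coordinate pairs; it does not reproduce the butterfly shuffling, the angle modulation, or the CUDA kernels described above, and the function names are hypothetical.

```python
import math


def givens_rotate(vector, i, j, theta):
    """Rotate `vector` by angle theta in the (i, j) coordinate plane."""
    c, s = math.cos(theta), math.sin(theta)
    out = list(vector)
    out[i] = c * vector[i] - s * vector[j]
    out[j] = s * vector[i] + c * vector[j]
    return out


def rotation_stage(vector, angles):
    """Apply one Givens rotation to each disjoint pair (0,1), (2,3), ...

    `angles` plays the role of the learned parameters: one angle per pair.
    """
    out = list(vector)
    for k, theta in enumerate(angles):
        out = givens_rotate(out, 2 * k, 2 * k + 1, theta)
    return out


def norm(vector):
    return math.sqrt(sum(x * x for x in vector))


x = [3.0, 4.0, 1.0, 2.0]
y = rotation_stage(x, [0.3, -1.2])
# Rotations are orthogonal, so the vector norm is preserved up to rounding.
```

Because every stage is a product of rotations, the composed map stays in SO(n) regardless of the angle values, so norm preservation holds by construction rather than through a regularizer.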

MatterCraft

AI consulting — translates MatterWave research into phased AI implementations for businesses.

Matter Craft Tech Website

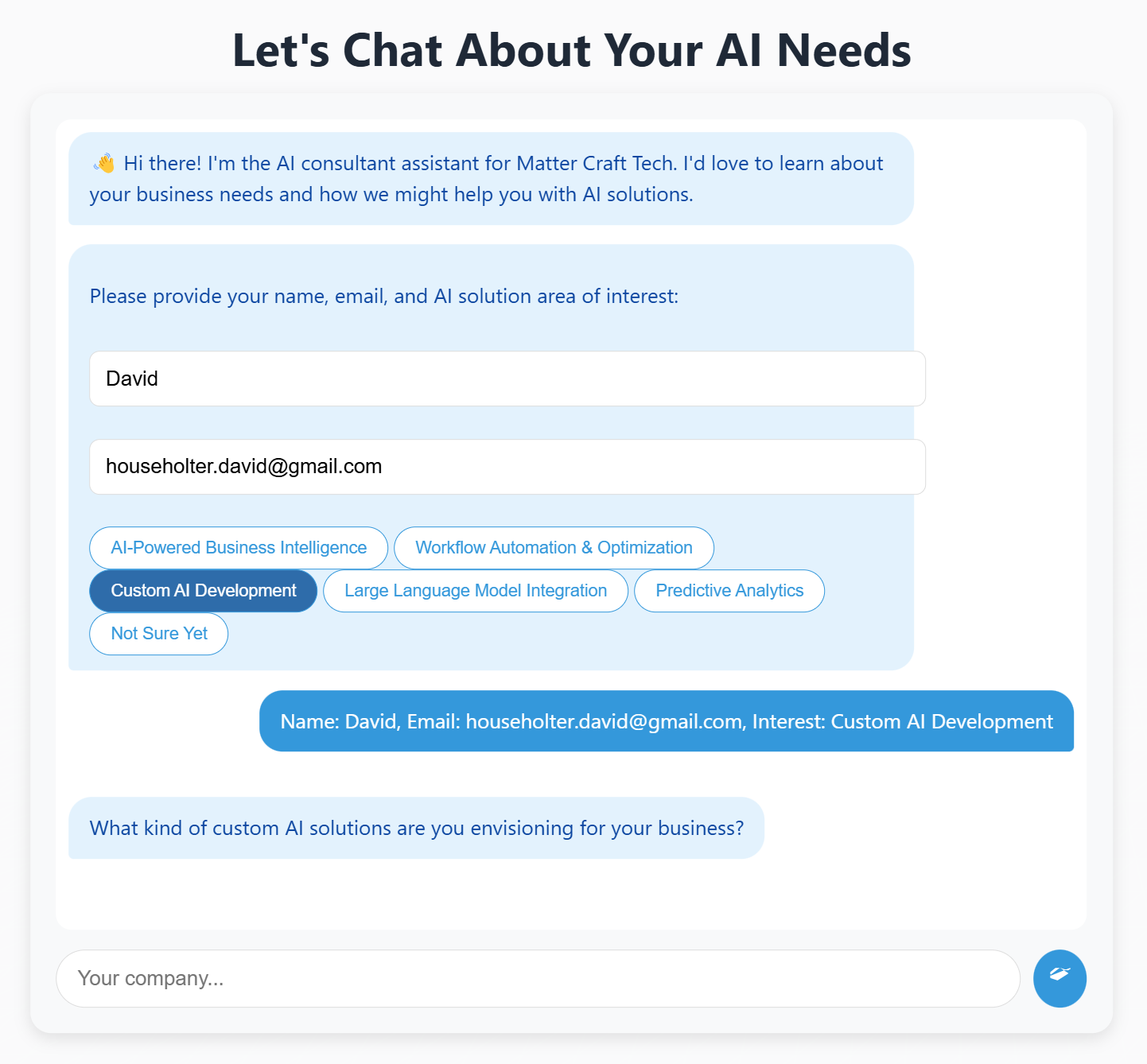

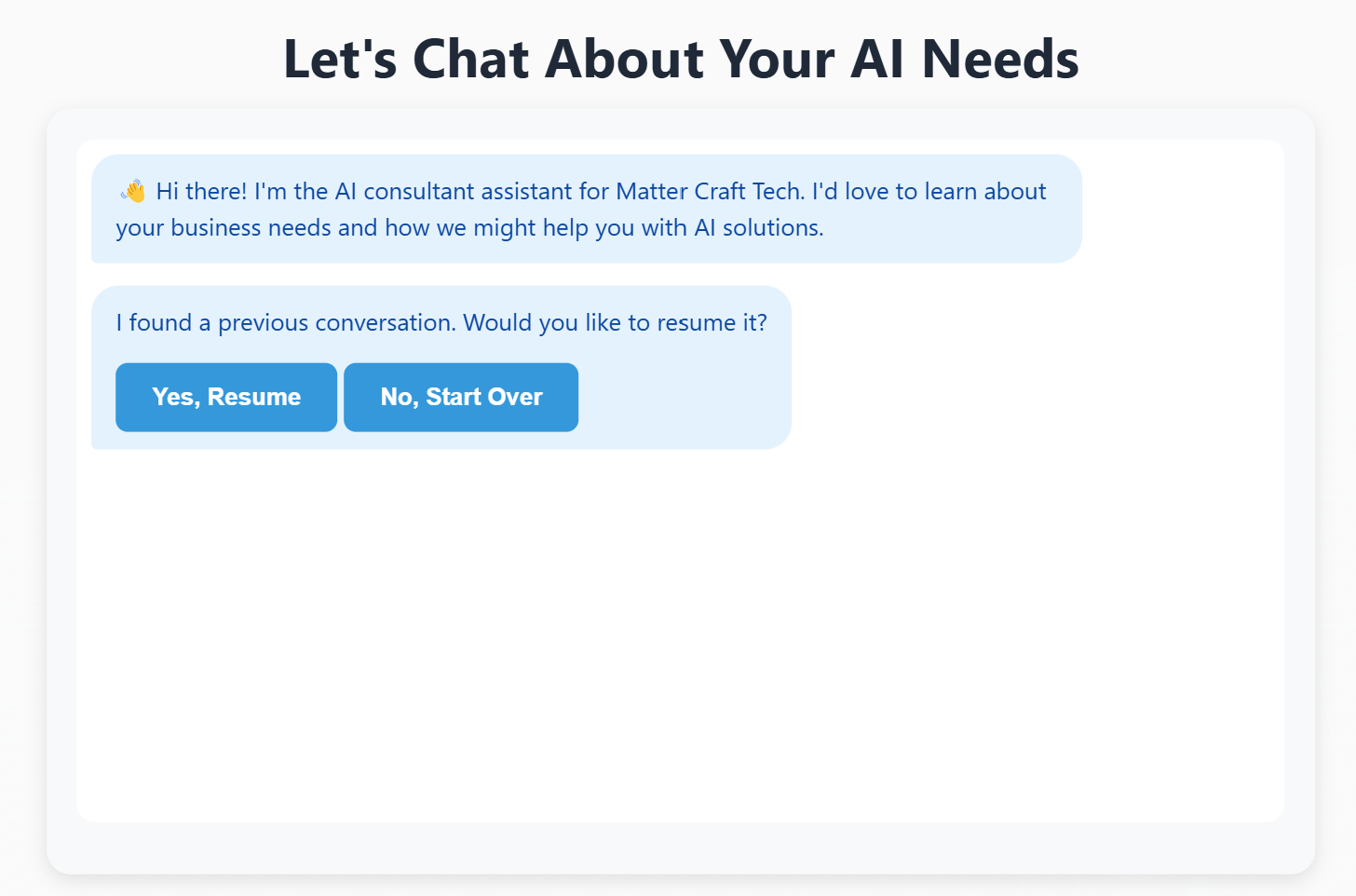

Custom website with an interactive contact form powered by a bespoke chat component. Dynamic question branching based on user input, persistent chat history, backend API integration, and robust error handling. Built with HTML, WebGL, JavaScript, Tailwind CSS, and FastAPI.

Homepage

Chat Interface

Chat Interaction